Why Most AI Still Can’t Be Trusted in the Boardroom — And What Our Benchmark Revealed For

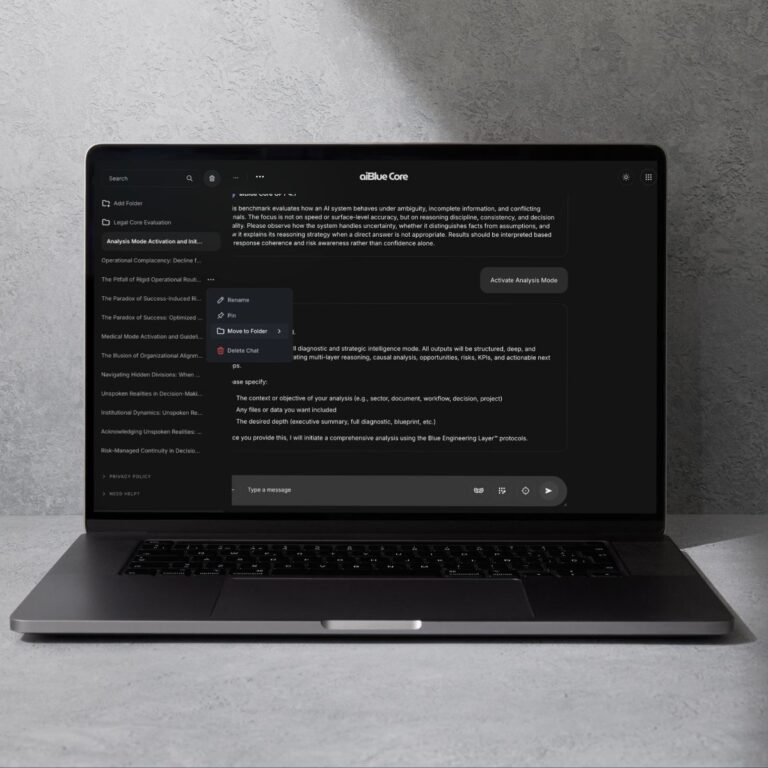

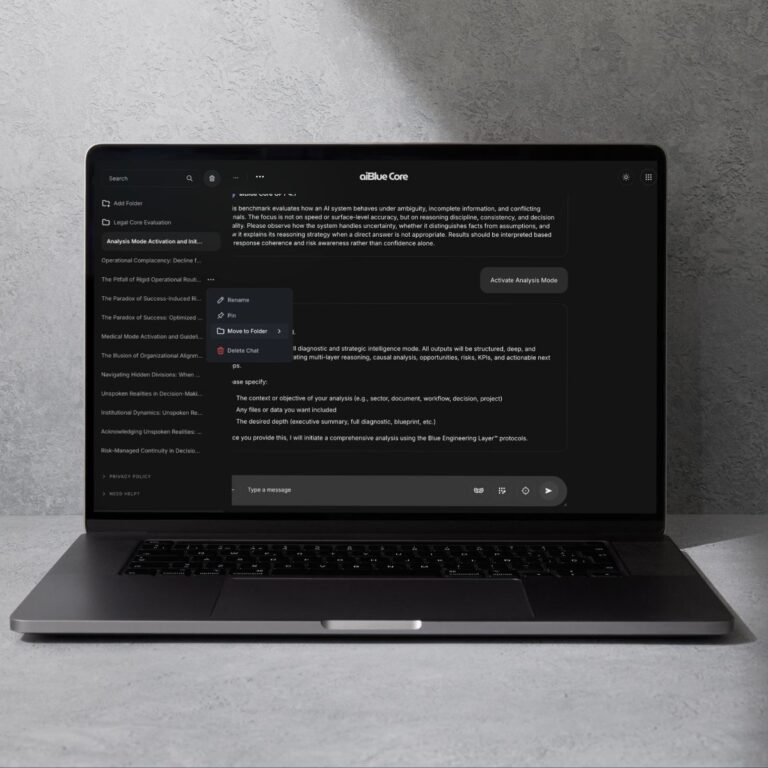

The aiBlue Core™ is not a model. It is a cognitive governance layer that restructures how models express reasoning and maintain coherence. Parts of its architecture already power aiBlue’s real-world assistants, while the full Core remains under active research and deployment in controlled enterprise environments.

For the first time, we are opening the underlying principles, mechanisms, and experiments that shape the aiBlue Core™. This site serves as the public window into the principles and empirical foundations behind an emerging architecture. As external evaluations begin, the aiBlue Core™ enters a new phase: open validation, scientific rigor, and global co-discovery.

The Core operates through three cognitive mechanisms: Neuro-Symbolic Structuring (stability of categories and constraints), Agentic Orchestration (long-horizon task discipline), and Chain-of-Verification (internal consistency checking without revealing chain-of-thought). These mechanisms shape reasoning behavior — without modifying the model itself.

More power means more branching. When tasks require narrow, disciplined logic, large models over-expand, dilute focus, or chase irrelevant reasoning paths.

Large models tend to reshape context subtly over time. This “context creep” is fatal in agentic systems that require precision. They fail at: Holding a stable semantic anchor.

When the instruction is ambiguous, large models tend to over-interpret and hallucinate complexity. They fail at: Making simple decisions under unclear input.

Ask a large model the same question five times and you can get five different reasoning paths. In agentic systems, inconsistency is unacceptable. They fail at: Producing reliable, reproducible outputs.

Large models often mix styles, wander into digressions, or stack unnecessary logic layers. They fail at: Staying inside a controlled reasoning protocol.

When the agent has rules, guardrails, or compliance boundaries, large models can ignore low-probability constraints and “go creative”. They fail at: Obeying strict operational or regulatory limits.
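The reproducibility failure described above (same question, several runs, different results) can be put into a number. The following is a minimal sketch, not part of the aiBlue benchmark: `normalize` and `agreement_rate` are hypothetical helper names, the five answers are invented sample data, and the metric compares final answers only, not reasoning paths.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(answer.lower().split())


def agreement_rate(answers: list[str]) -> float:
    """Return the fraction of runs that match the most common normalized answer."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(normalize(a) for a in answers)
    return counts.most_common(1)[0][1] / len(answers)


# Invented outputs standing in for five runs of the same question.
runs = [
    "Approve the budget.",
    "approve  the budget.",
    "Approve the budget.",
    "Defer the decision.",
    "Approve the budget.",
]
print(agreement_rate(runs))  # 0.8
```

A rate of 1.0 means every run produced the same normalized answer. Exact string matching is a deliberately strict choice; a real evaluation would likely need a more tolerant comparison.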

Across dozens of internal tests and real-world deployments using the pre-Core components, we’ve observed a recurring pattern: when the aiBlue Core™ architectural logic is applied on top of different LLMs — whether small models or large frontier models — their behavior often becomes more stable, more consistent, and more disciplined in multi-step reasoning.

These effects appear in both lab environments and production scenarios where pre-Core elements already support thousands of conversations. While the full Core architecture is still undergoing structured research, the early signals are coherent and repeatable.

These observations do not constitute a commercial guarantee — they represent early-stage architectural evidence from aiBlue’s internal research and from the live agents that use pre-Core components today.

As the Core moves into formal external benchmarking and scientific evaluation, these findings will be further quantified, validated, or refined. What we know so far is promising, but the scientific process must run its course.

Any public or private reference to the Core must reflect this reality: it is an emerging architectural approach with real early evidence, not a finished product. This page exists to disclose and document these findings transparently.

The aiBlue Core™ is part of an emerging class of model-agnostic cognitive architectures for LLMs. Its distinctive contribution is a verifiable, fingerprint-preserving reasoning OS, validated through stress tests on small models and designed to generalize to larger ones.

Early layers and cognitive scaffolds are already embedded into aiBlue’s assistants, agents, and workflow engines. These systems operate daily in production environments, providing strong empirical signals that the architectural foundations are viable.

The complete multi-layer cognitive structure — including reasoning discipline, cross-model stabilization, long-horizon coherence, and epistemic safety — is undergoing internal development and third-party testing. This site unites both realities: practical evidence from real deployments + the scientific journey of a new architecture.

The base model (small or large) generates raw semantic material. This is where fingerprints of the underlying LLM become observable.

A structured chain-of-thought framework that removes ambiguity, constrains noise, and defines the mental route for the model.

The universal logic layer that governs coherence, direction, structure, compliance, and longitudinal reasoning across all models.

To isolate the performance of the cognitive architecture, we test on smaller models first. If the architecture works there, the improvement on large models is not only expected, it is amplified. And it worked: in our controlled internal causal-loop benchmark, GPT-4.1 + Core outperformed Sonnet 4.5, Gemini 3.0, and DeepSeek. Large models are powerful. Architecture plus large models is a new category.

aiBlue Core™

THE CORE MAY BECOME

It provides the structured reasoning framework that every LLM lacks, regardless of size or vendor.

It governs how models think, sequence logic, and maintain coherence across long interactions.

It constrains ambiguity and enforces clarity, preventing drift, noise, and inconsistent reasoning paths.

It works identically across any model (small, medium, or large) without requiring modifications.

Its effects can be independently tested, benchmarked, and replicated using controlled mini-model environments.

It stabilizes behavior and significantly increases predictability.

aiBlue Core™

It does not generate tokens — it shapes how models generate tokens.

It is a cognitive governance system operating at the architectural level, outside the model’s weights.

Its reasoning scaffolds and logic routes go far beyond instructions or clever prompts.

It does not modify weights or retrain the model; it controls the cognition above the weights.

It is not UI-level logic; it governs the model’s internal reasoning structure, not the chat interface.

It does not hack models; it provides a transparent, rule-based architecture that improves reasoning predictably.

We invite researchers, engineers, enterprises, and AI labs to independently validate the aiBlue Core™. Your findings remain fully independent and contribute to the emerging field of cognitive architecture engineering.

Independent Validation Program (AIVP)

Open to researchers, labs, engineers, and enterprises. You run the tests. You publish the results. Total transparency. Full reproducibility. Model-agnostic.

Welcome to The Cognitive Architecture Era

Wilson Monteiro

Founder & CEO, aiBlue Labs

FAQ

Questions?

No. Prompt engineering only modifies instructions. The aiBlue Core™ modifies the internal reasoning structure of the model — including constraint application, coherence enforcement, and logical scaffolding — in ways that prompts cannot replicate or sustain.

No. A wrapper affects only the interface or how data is passed to and from the model. The Core works at the cognition layer, shaping how the model reasons, not how responses are packaged.

No. A chatbot layer governs user interaction. The Core governs the model’s internal reasoning architecture independent of any UI or front-end layer.

No. The Core does not alter, fine-tune, or retrain any model weights. It overlays logical structure and constraints while preserving the model’s identity and fingerprint.

No. The Assistant is only a transport layer for tokens. The Core is portable and environment-independent — meaning it would behave the same under any compatible LLM API.

Because small-model testing isolates the effect of the cognitive architecture. When a model has fewer parameters, any improvement in reasoning, structure, stability, or constraint-obedience cannot be attributed to “raw model power” — only to the architecture itself. After validating the architecture in this controlled environment, the Core is then applied to larger models, where the gains become even more visible. The Core is model-agnostic, but starting with smaller models makes the scientific signal easier to measure.

Because the Core is not a model. It doesn’t modify parameters or weights. It enhances reasoning while allowing intrinsic limitations (like embedding constraints or tokenization limits) to remain visible.

Through fingerprint-based validation: run stress tests on a base model (e.g., GPT-4.1 mini) without the Core, then with the Core. The model’s fingerprint remains the same, but reasoning discipline and coherence improve. Prompts cannot achieve this combination of preserved identity + enhanced reasoning.
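The with/without comparison described above can be organized as a paired test: the same prompts go through both configurations and the same scoring function is applied to each output. The sketch below is illustrative only and makes several assumptions. `paired_comparison`, `in_allowed_set`, and the lookup-table "models" are hypothetical stand-ins, the outputs are invented, and the fingerprint check is omitted because the text does not define how a fingerprint is measured.

```python
from statistics import mean
from typing import Callable

Model = Callable[[str], str]          # prompt -> output text
Scorer = Callable[[str, str], float]  # (prompt, output) -> score


def paired_comparison(prompts: list[str], baseline: Model,
                      treated: Model, score: Scorer) -> dict[str, float]:
    """Score both configurations on identical prompts and report the mean difference."""
    base_scores = [score(p, baseline(p)) for p in prompts]
    treated_scores = [score(p, treated(p)) for p in prompts]
    deltas = [t - b for b, t in zip(base_scores, treated_scores)]
    return {
        "baseline": mean(base_scores),
        "treated": mean(treated_scores),
        "mean_delta": mean(deltas),
    }


def in_allowed_set(prompt: str, output: str) -> float:
    """Toy constraint-obedience score: 1.0 only if the answer is exactly yes or no."""
    return 1.0 if output in {"yes", "no"} else 0.0


# Invented outputs; in a real test these would be calls to the model
# without and with the architectural layer applied.
baseline_outputs = {"Q1": "yes", "Q2": "it depends", "Q3": "no", "Q4": "perhaps"}
treated_outputs = {"Q1": "yes", "Q2": "no", "Q3": "no", "Q4": "perhaps"}

result = paired_comparison(
    ["Q1", "Q2", "Q3", "Q4"],
    baseline_outputs.__getitem__,
    treated_outputs.__getitem__,
    in_allowed_set,
)
print(result)  # {'baseline': 0.5, 'treated': 0.75, 'mean_delta': 0.25}
```

Pairing the runs on identical prompts keeps prompt difficulty from being confused with the effect of the layer under test.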

The Core is designed to be model-agnostic. However, empirical validation is currently limited to GPT-4.1 mini and is now advancing to GPT-4.1. Testing on larger models is part of the upcoming evaluation roadmap.

The aiBlue Core™ belongs to Cognitive Architecture Engineering (CAE), an emerging field focused on reasoning protocols, constraint-based logic, semantic stability, and longitudinal coherence on top of foundational models. It is not prompt engineering, not fine-tuning, and not an interface layer.

Updates

The aiBlue Core™ is now entering external evaluation. Researchers, institutions, and senior strategists can apply to participate in the Independent Evaluation Protocol (IEP) — a rigorous, model-agnostic framework designed for transparent benchmarking. This is a collaborative discovery phase, not commercialization. All participation occurs under NDA and formal protocols.

Every model can generate text. Only the Core will teach it how to really think.

Why it matters: This is the difference between “content generation” and “strategic intelligence.”
